Latest Articles

-

11 Ways to Improve Website UX/UI (Using IP Geolocation & Other Tactics)

The terms UX (user experience) and UI (user interface) are often used interchangeably. They’re so …

-

Using 6 Google Analytics Features to Improve User Experience and Website Metrics

Google Analytics is a fantastic tool because it eliminates the guesswork as to how visitors …

-

How to Create Effective User Flows in Sketch (3 Simple Steps)

A user flow is a visual representation of the different paths a user can take …

-

7 Steps to Creating a Spectacular UX Case Study

In this tutorial, I’m going to walk you through how to create an impactful UX …

-

17 Must-Read Books for Product and UX Designers

There are plenty of design books out there, such as the classics Don’t Make Me …

-

Make These Changes to Meet Web Design Accessibility Standards

Accessibility is a key function of any web design or app strategy. New government accessibility …

-

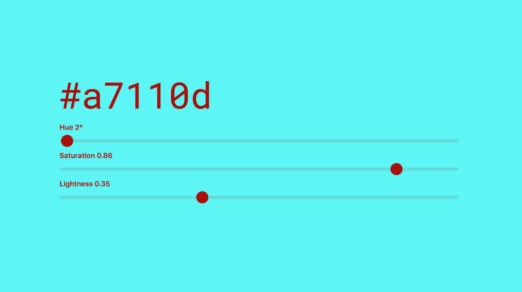

Use Color Accessibility Tools to Improve Your Website Design

Did you know that more than 4 percent of the population is color blind? Different …

-

Improving the UX with Userstack API

Userstack is a REST API, designed by apilayer, the developer behind amazing tools such as …

-

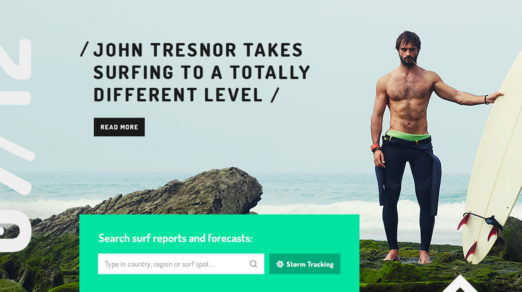

UX Best Practices for Using Search on Your Website

When a website contains a lot of content, your viewers are often not sure how …

-

A Beginner’s Guide to Voice UX Prototyping

As voice technology becomes more and more prominent, we’ll need to design more and more …

-

UX Design and GDPR: Everything You Need to Know

The internet is where we spend a lot of our time, whether working, studying or …

-

What in the World Are Microinteractions?

Microinteractions are all around us. Simply put, a microinteraction is a specific moment of interaction with a …

-

How to Improve Customer Loyalty through User Experience

When you establish a bond of trust between a company and its customers, you forge …

-

How to Improve the UX for Your e-Commerce Website Visitors

One of the main parts of a high-level website is undoubtedly a great user experience. …

-

The Design Side of Conversion Rate Optimization

Conversions drive the web, but most designers don’t think this way. Whenever you write an …

-

6 Creative Ways to Use Repeat Grids in Adobe XD

Adobe XD recently made a big splash during its announcement because of a few unique features, …

-

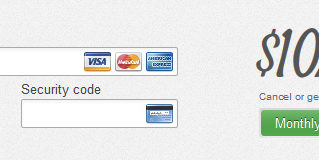

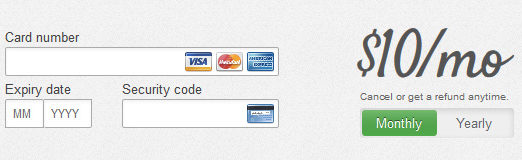

The Ultimate UX Design of: the Credit Card Payment Form

The Peak Point of eCommerce and SaaS: the Credit Card Payment Form. If you’re …

-

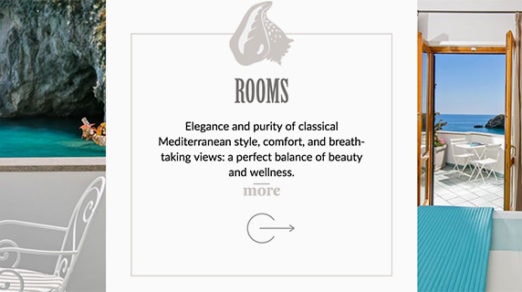

Best Practices of Hotel Website UX Design

User experience is an integral part of any website design, and there is no doubt …

-

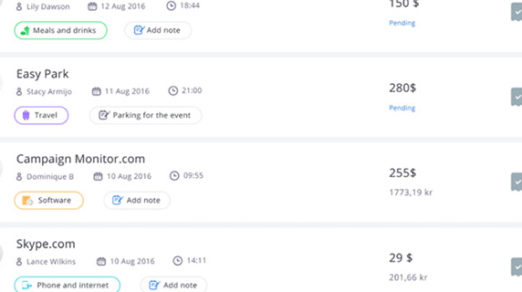

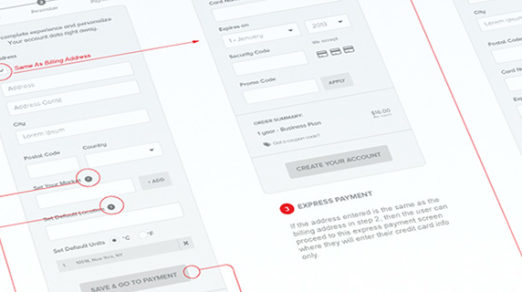

Web Design Usability Tips for Billing Forms

Each ecommerce site has its own checkout flow moving the user from a shopping cart to the …

-

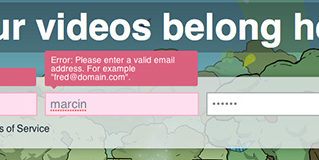

The Ultimate UX Design of: the Sign-Up Form

A typical sign-up form contains a couple of form fields (it seems like the most …

-

Intro Guide to UX Reviews for Web Designers

A great UX review can do wonders for any website. By looking over the entire …

-

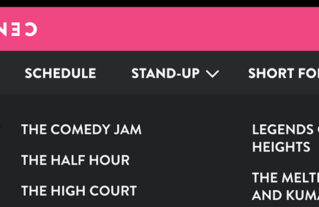

UX Design Tips for Dropdown Navigation Menus

Dropdown menus have come a long way thanks to modern JavaScript and CSS3 effects. But …

-

How Great Icons Can Affect the User Experience

Interfaces are all about communication and getting things done. A website’s UI is a means …

-

4 Ways to Improve Usability and User Experience by Decluttering Designs

We often speak about decluttering in the sense of physical stuff like closets or storage. …